Uncertainty in Explainable Artificial Intelligence (PhD Thesis)

Teodor Chiaburu

Defended with grade "very good" on the 10th of March at the Einstein Center for Digital Future, Berlin

Download thesis here.

Why walk when you can dance?

Hi there! My name is Teo. I am a Data Science graduate and as of March 2026 I have just finished my PhD at Berliner Hochschule für Technik and Technische Universität Berlin. My work has mainly focused on Explainable AI (XAI) and operationalizing the concept of Uncertainty in XAI (UXAI). During my PhD, I gained extensive experience with real-world data and building trustworthy and robust multimodal Machine Learning pipelines for environmental applications. Whenever I'm not working, I'm either dancing or teaching Salsa Cubana, playing table tennis or cooking a delicious meal :^)

Uncertainty in Explainable Artificial Intelligence (UXAI)

Under the supervision of Prof. Dr. Felix Bießmann (BHT), Prof. Dr. Frank Haußer (BHT) and Prof. Dr. Benjamin Blankertz (TU Berlin).

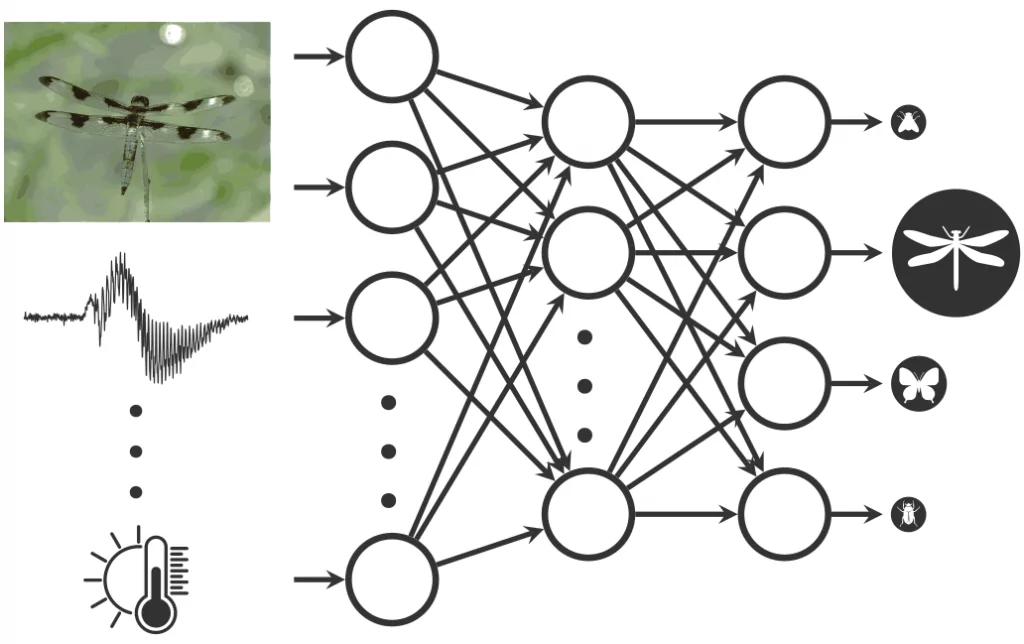

Responsible for developing and validating ML models (in Python, TensorFlow) for recognizing and hierarchically classifying insect species based on their wingbeat frequencies (see project page).

Responsible for developing and applying standard ML methods in C++ for classifying iron particles on microscopic images (see website).

Department for 3D-Printing: wrote a web app in JavaScript and PHP for supervising the 3D-printing process.

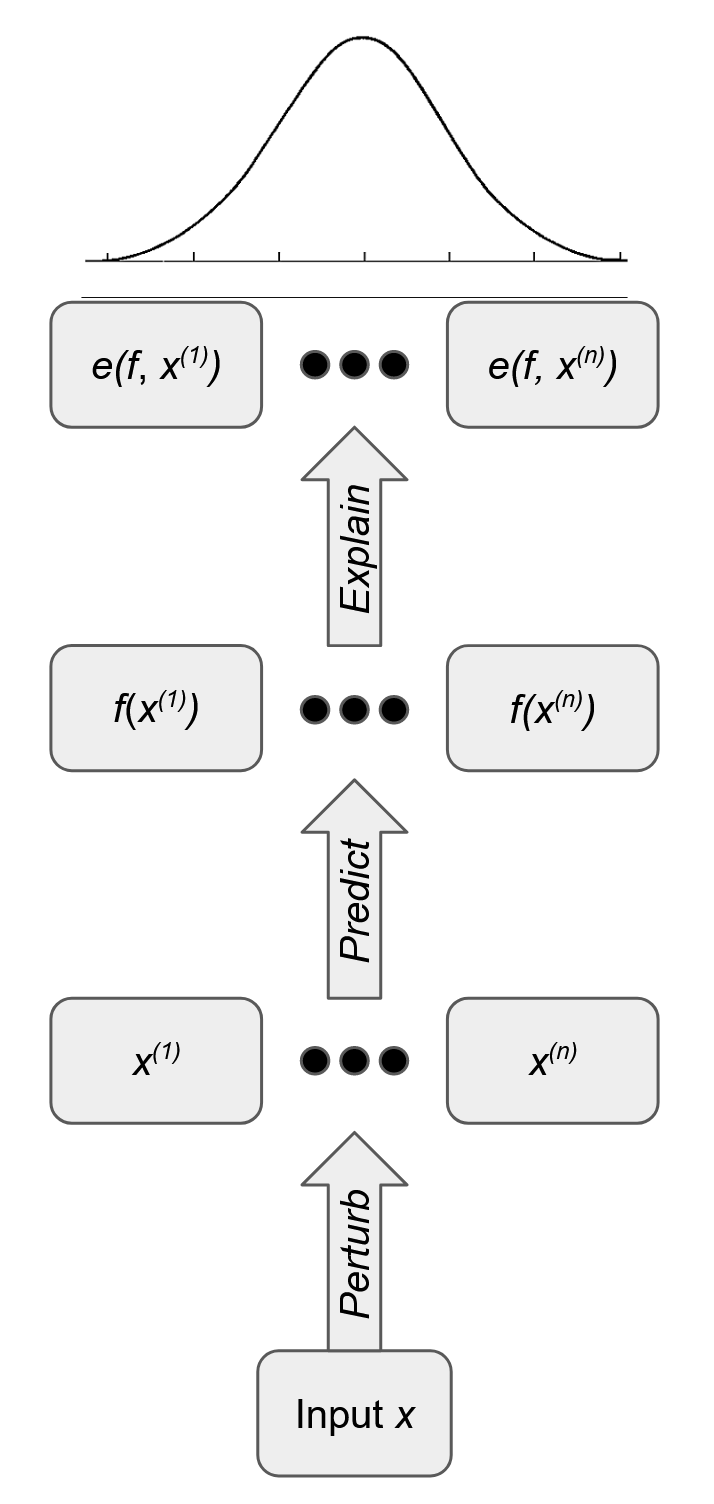

We propose concrete implementations for the correctness, consistency, continuity, and compactness properties to evaluate uncertainty attributions. Additionally, we introduce conveyance, a property tailored to uncertainty attributions that evaluates whether controlled increases in epistemic uncertainty reliably propagate to feature-level attributions. We demonstrate our evaluation framework with eight metrics across combinations of uncertainty quantification and feature attribution methods on tabular and image data. Although most metrics rank the methods consistently across samples, inter-method agreement remains low. This suggests no single metric sufficiently evaluates uncertainty attribution quality. Download paper here.

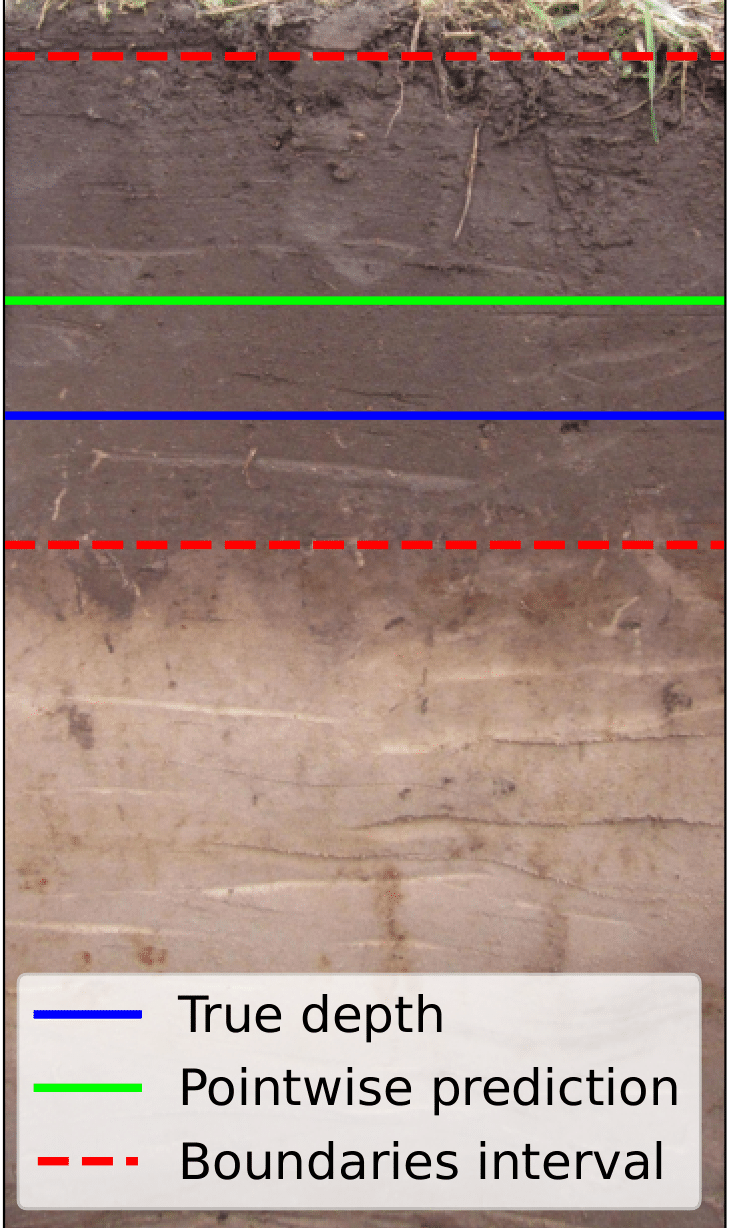

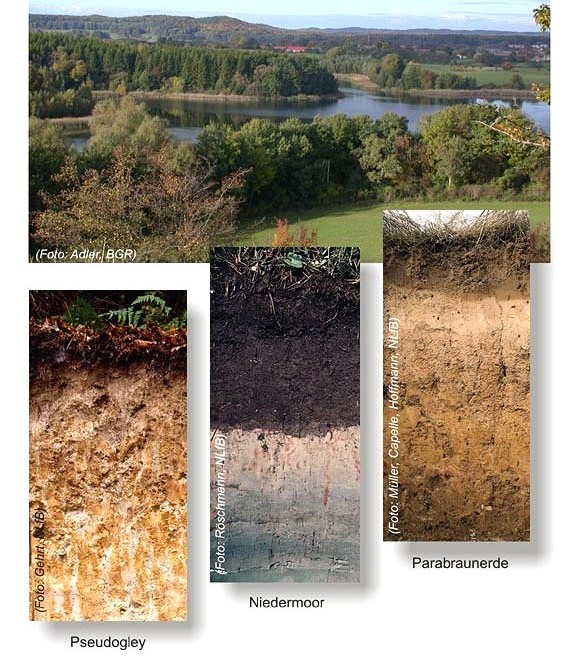

In this work, we apply conformal prediction to SoilNet, a multimodal multitask model for describing soil profiles. We design a simulated human-in-the-loop (HIL) annotation pipeline, where a limited budget for obtaining ground truth annotations from domain experts is available when model uncertainty is high. Our experiments show that conformalizing SoilNet leads to more efficient annotation in regression tasks and comparable performance scores in classification tasks under the same annotation budget when tested against its non-conformal counterpart. Download paper here.
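As a rough illustration of the idea, the following minimal sketch applies split conformal prediction to a toy regression task and then spends a fixed annotation budget on the samples with the widest intervals. It is not the SoilNet pipeline: `model`, `spread`, the synthetic data, and the budget of 50 are all hypothetical stand-ins chosen for this example.

```python
import math
import random


def conformal_quantile(scores, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of calibration scores."""
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration points to guarantee coverage at this alpha.
        return float("inf")
    return sorted(scores)[rank - 1]


def model(x):
    # Hypothetical stand-in for a trained regressor.
    return 2.0 * x


def spread(x):
    # Hypothetical per-sample difficulty estimate (noise grows with x).
    return 0.1 + 0.5 * x


def sample(rng, n):
    xs = [rng.random() for _ in range(n)]
    ys = [2.0 * x + rng.gauss(0.0, spread(x)) for x in xs]
    return xs, ys


rng = random.Random(0)
cal_x, cal_y = sample(rng, 500)    # held-out calibration split
test_x, test_y = sample(rng, 500)  # unseen test split

alpha = 0.1  # target miscoverage rate, i.e. roughly 90% coverage

# Normalized nonconformity scores on the calibration split.
scores = [abs(y - model(x)) / spread(x) for x, y in zip(cal_x, cal_y)]
q_hat = conformal_quantile(scores, alpha)

# Interval for a test point: model(x) +/- q_hat * spread(x).
half_widths = [q_hat * spread(x) for x in test_x]
covered = [abs(y - model(x)) <= h for x, y, h in zip(test_x, test_y, half_widths)]
coverage = sum(covered) / len(covered)

# Simulated human-in-the-loop step: send the widest intervals to the expert.
budget = 50
to_annotate = sorted(range(len(test_x)), key=lambda i: half_widths[i], reverse=True)[:budget]

print(f"empirical coverage: {coverage:.3f}")
print(f"samples sent to annotators: {len(to_annotate)}")
```

Ranking by interval width is only one possible selection rule; any uncertainty score derived from the conformal sets could take its place.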

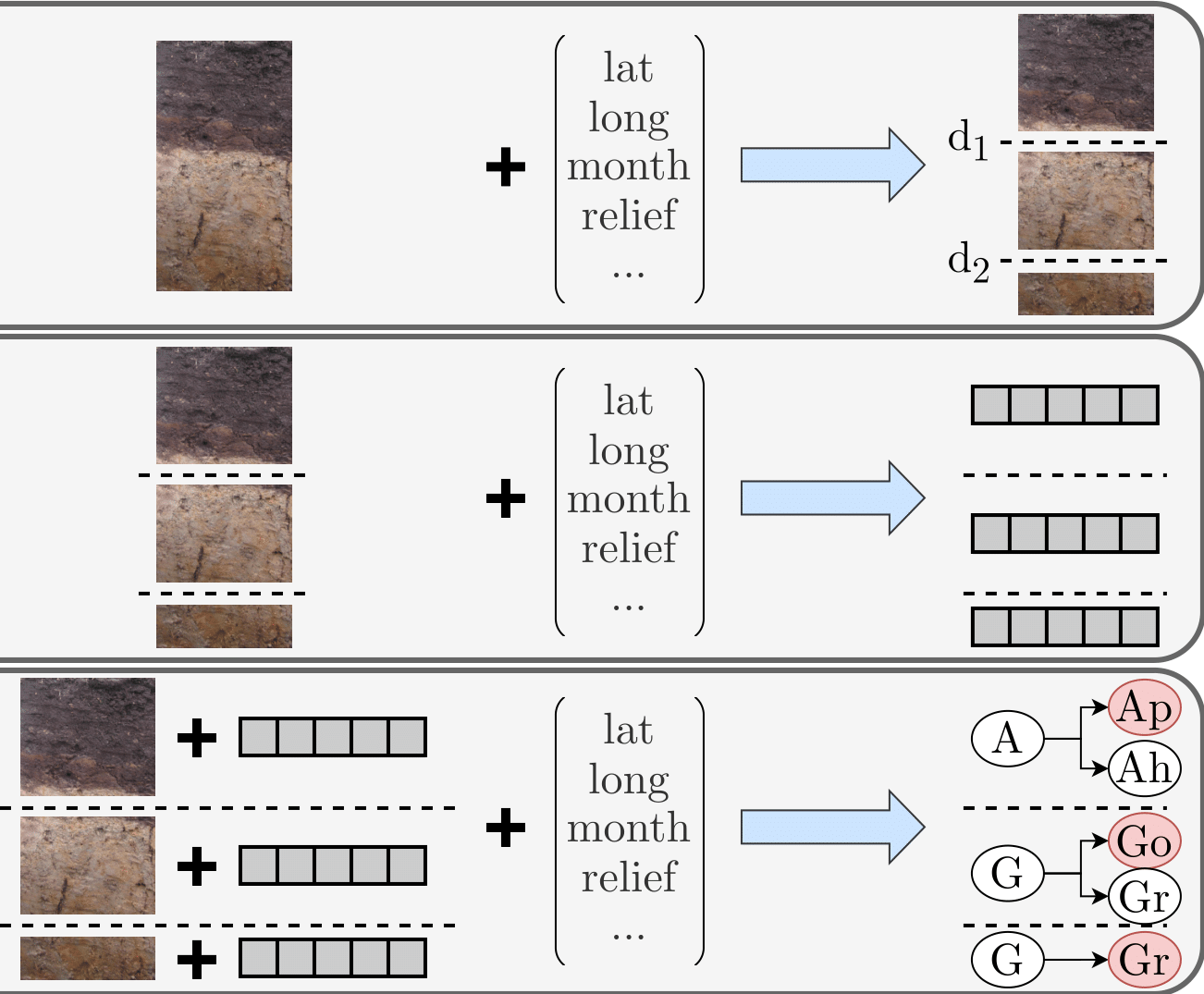

In this work, we propose SoilNet, a multimodal multitask model that describes soil profiles through a structured, modularized pipeline. Our approach integrates image data and geotemporal metadata to first predict depth markers, segmenting the soil profile into horizon candidates. Each segment is characterized by a set of horizon-specific morphological features. Finally, horizon labels are predicted based on the multimodal concatenated feature vector, leveraging a graph-based label representation to account for the complex hierarchical relationships among soil horizons. Our method is designed to address complex hierarchical classification, where the number of possible labels is very large, imbalanced and non-trivially structured. We demonstrate the effectiveness of our approach on a real-world soil profile dataset. All the code and experiments can be found in our repository. Download paper here.

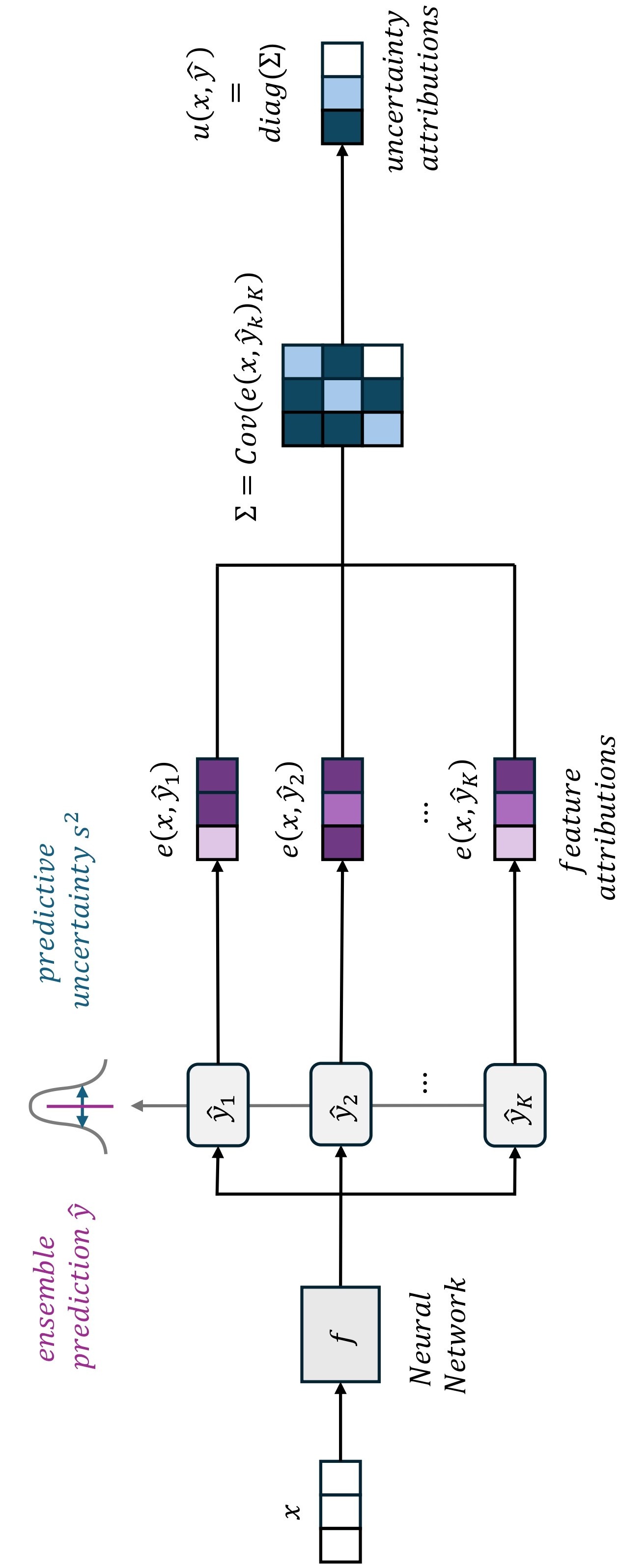

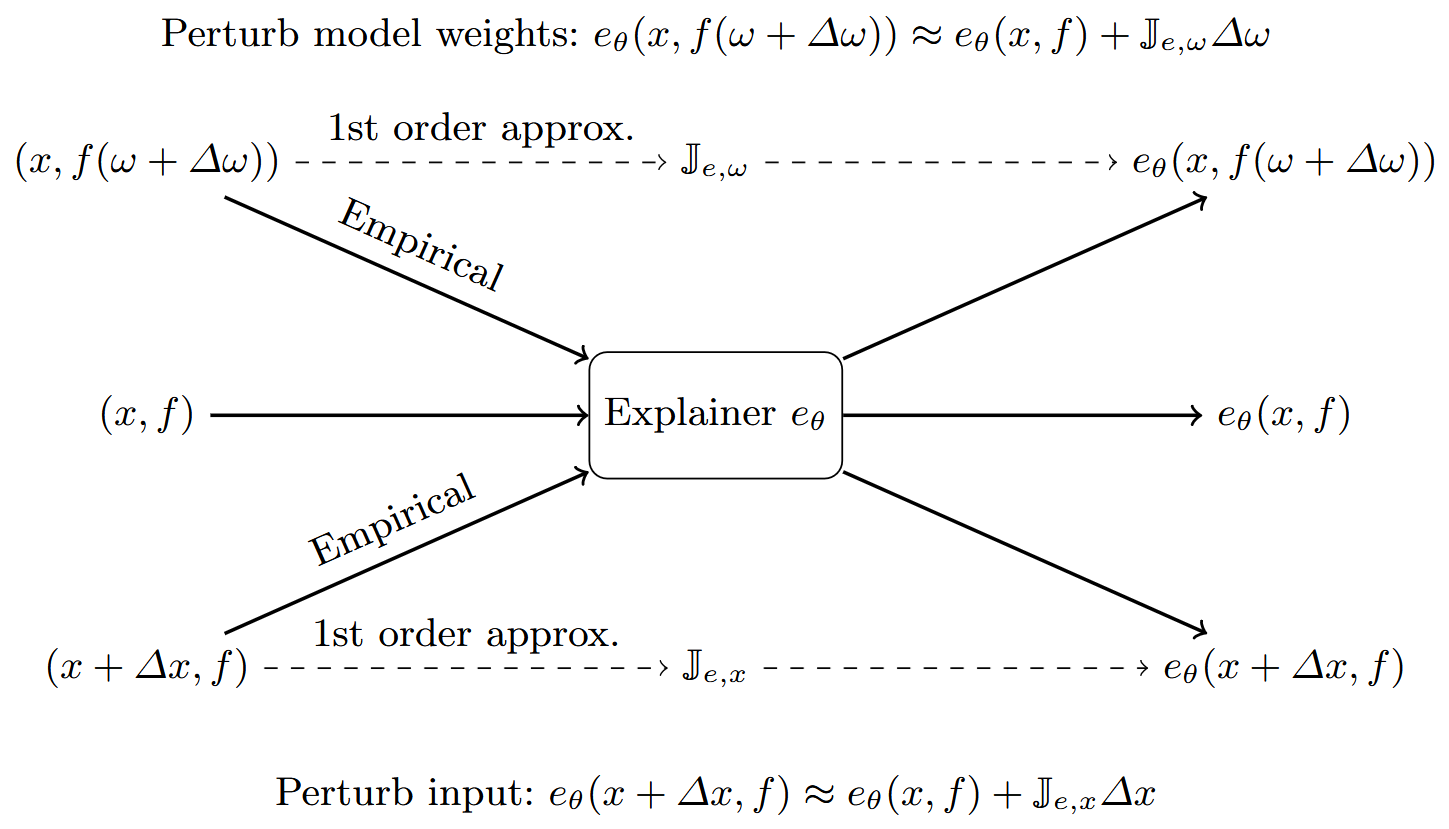

This paper introduces a framework to measure uncertainty in XAI explanations, which stems from input data and model parameter variations. We use analytical and empirical methods to estimate how this uncertainty affects explanations and evaluate their robustness across different datasets. Our study identifies XAI methods that don't reliably handle uncertainty, emphasizing the need for uncertainty-aware explanations in critical applications and revealing limitations in current XAI techniques. All the code and experiments can be found in our repository. Download paper here.
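To make the analytical versus empirical distinction concrete, here is a minimal sketch for the simplest possible case: a linear model with gradient-times-input attributions and Gaussian input noise. It is an illustration under these assumptions, not the framework from the paper; the `weights`, the input `x`, and the noise level `sigma` are hypothetical. For this setup the attribution of feature i is w_i * x_i, so its standard deviation under input noise is |w_i| * sigma, which the Monte Carlo estimate should approach.

```python
import random
import statistics

# Hypothetical linear model f(x) = sum(w_i * x_i).
weights = [2.0, -1.0, 0.5]


def attribution(x):
    """Gradient-times-input attribution; the gradient of f is just the weights."""
    return [w * xi for w, xi in zip(weights, x)]


def empirical_attribution_std(x, sigma, n_samples, rng):
    """Monte Carlo estimate of the attribution spread under Gaussian input noise."""
    samples = [
        attribution([xi + rng.gauss(0.0, sigma) for xi in x])
        for _ in range(n_samples)
    ]
    # Transpose so that each column holds all samples of one feature's attribution.
    return [statistics.pstdev(column) for column in zip(*samples)]


rng = random.Random(0)
x = [1.0, 2.0, 3.0]
sigma = 0.1

empirical = empirical_attribution_std(x, sigma, 5000, rng)
analytical = [abs(w) * sigma for w in weights]

for i, (e, a) in enumerate(zip(empirical, analytical)):
    print(f"feature {i}: empirical std {e:.4f}, analytical std {a:.4f}")
```

For nonlinear models no such closed form is generally available, which is where a first-order approximation or pure sampling would come in.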

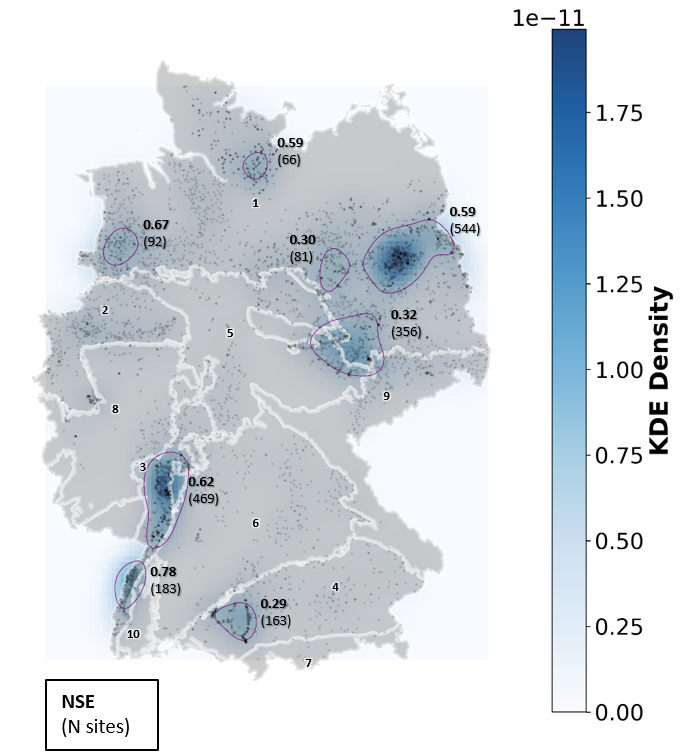

Accurate groundwater level predictions are essential for sustainable groundwater management. This study applies two machine learning (ML) models, N-HiTS and TFT, to seasonally predict groundwater levels for 5,288 monitoring wells across Germany. Both approaches provided good predictions across diverse hydrogeological conditions, with N-HiTS outperforming TFT. Both models showed better performance in areas with high data density, in lowlands, and when distinct seasonal dynamics occurred. Download paper here.

We propose a protocol for evaluating neural network architectures for combined sewer systems time series forecasting with respect to predictive performance, model complexity and robustness to perturbations. In addition, we assess model performance on peak events and critical fluctuations, as these are the key regimes for urban wastewater management. To investigate the feasibility of lightweight models suitable for IoT deployment, we compare global models, which have access to all information, to local models, which rely solely on nearby sensor readings. Download paper here.

We present a comprehensive empirical evaluation of several state-of-the-art time series models for predicting sewer system dynamics in a large urban infrastructure, utilizing three years of measurement data. We especially investigate the potential of Deep Learning models to maintain predictive precision during network outages by comparing global models, which have access to all variables within the sewer system, and local models, which are limited to data from a restricted set of local sensors. Download paper here.

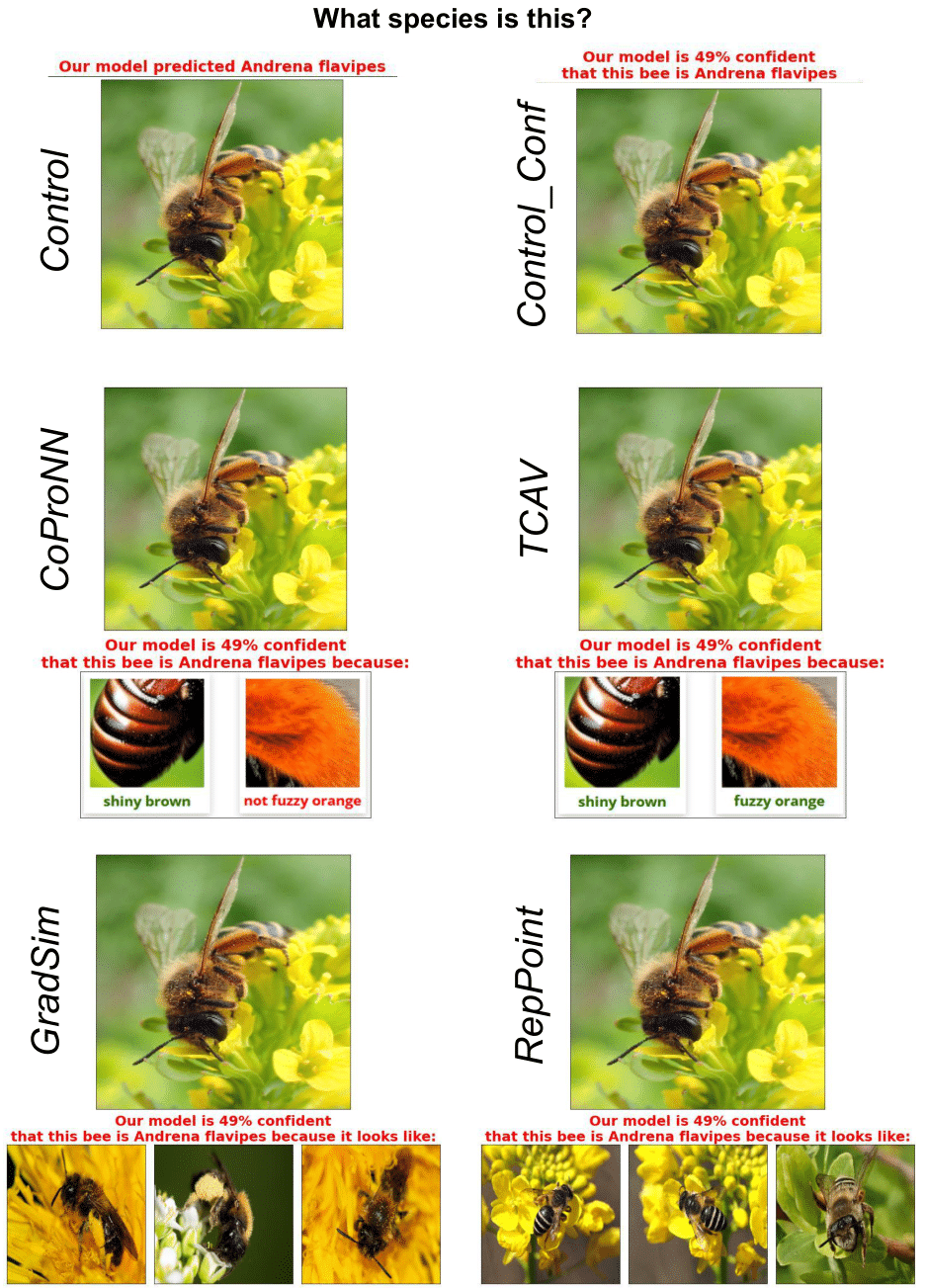

We propose an experimental design to evaluate the potential of XAI in human-AI collaborative settings as well as the potential of XAI for didactics. In a user study with 1200 participants, we investigate the impact of explanations on human performance on a challenging visual task: the annotation of biological species in complex taxonomies. Download paper here.

We review established and recent methods to account for uncertainty in ML models and XAI approaches and we discuss empirical evidence on how model uncertainty is perceived by human users of XAI systems. We summarize the methodological advancements and limitations of methods and human perception. Finally, we discuss the implications of the current state of the art in model development and research on human perception. We believe highlighting the role of uncertainty in XAI will be helpful to both practitioners and researchers and could ultimately support more responsible use of AI in practical applications. Download paper here.

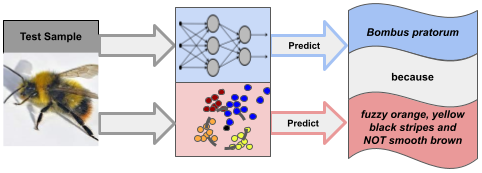

We present a novel approach that enables domain experts to quickly and intuitively create concept-based explanations for computer vision tasks via natural language. Leveraging recent progress in deep generative methods, we propose to generate visual concept-based prototypes via text-to-image methods. These prototypes are then used to explain predictions of computer vision models via a simple k-Nearest-Neighbors routine. The approach can be evaluated offline against the ground truth of predefined prototypes, which can also be easily communicated to domain experts as they are based on visual concepts. All the code and experiments can be found in our repository. Download paper here.
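The nearest-neighbor step can be sketched roughly as follows. This is a minimal illustration, not the code from the repository: it assumes that the generated prototypes and the query image have already been mapped to embedding vectors by some feature extractor, and the concept names and three-dimensional vectors below are made up for the example.

```python
import math
from collections import Counter


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def explain_with_prototypes(query, prototypes, k=3):
    """Return the k prototypes closest to the query and the majority concept."""
    ranked = sorted(
        prototypes,
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    neighbours = ranked[:k]
    votes = Counter(concept for concept, _ in neighbours)
    return neighbours, votes.most_common(1)[0][0]


# Hypothetical (concept, embedding) pairs for text-to-image prototypes.
prototypes = [
    ("striped abdomen", [0.9, 0.1, 0.0]),
    ("striped abdomen", [0.8, 0.2, 0.1]),
    ("furry thorax", [0.1, 0.9, 0.1]),
    ("furry thorax", [0.2, 0.8, 0.0]),
    ("long antennae", [0.0, 0.1, 0.9]),
]

# Hypothetical embedding of the image whose prediction should be explained.
query = [0.85, 0.15, 0.05]

neighbours, top_concept = explain_with_prototypes(query, prototypes, k=3)
print("closest prototypes:", [concept for concept, _ in neighbours])
print("dominant concept:", top_concept)
```

Cosine similarity is an assumption here; Euclidean distance in the embedding space would work the same way with the sort order reversed.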

In this work we specifically address the problem of sewage water polluting surface water bodies after spilling over from rain tanks as a consequence of heavy rain events. We investigate to what extent state-of-the-art interpretable time series models can help predict such critical water level points, so that the excess can promptly be redistributed across the sewage network. Our results indicate that modern time series models can contribute to better waste water management and prevention of environmental pollution from sewer systems. All the code and experiments can be found in our repository. Download paper here.

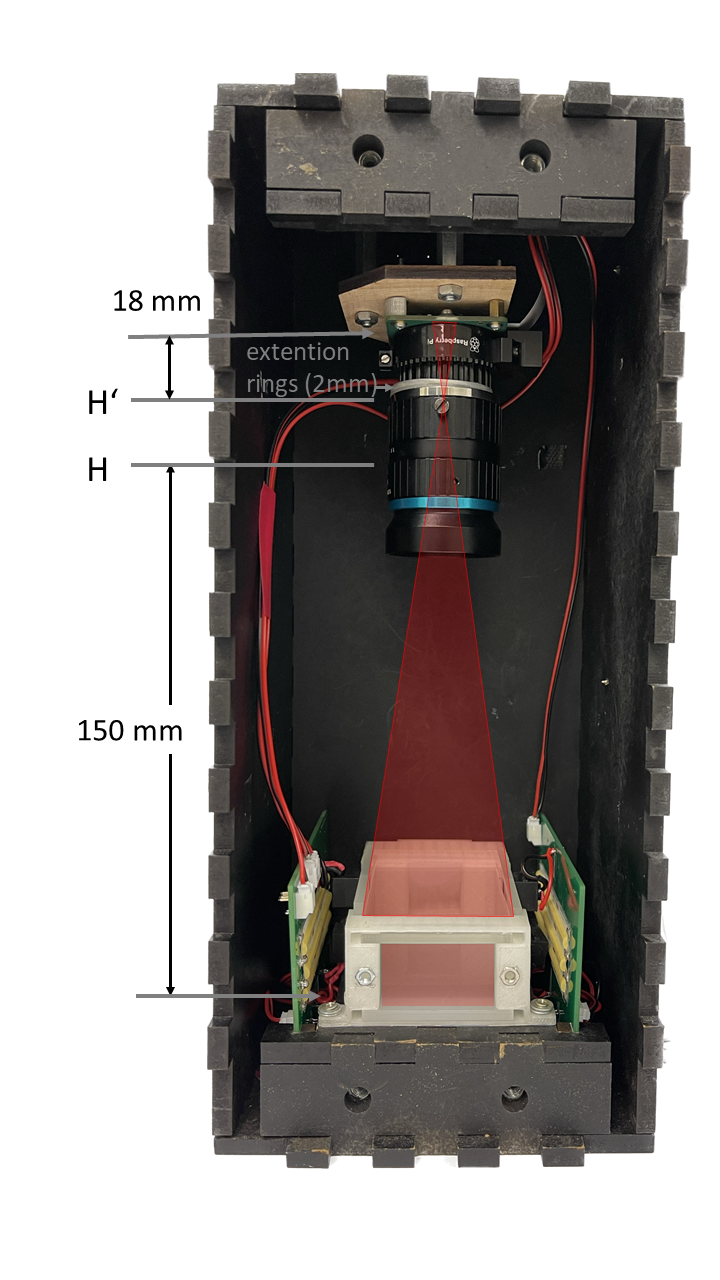

In this work, we present an imaging method as part of a multisensor system developed as a low-cost, scalable, open-source system that is adaptable to classical trap types. The image quality meets the requirements needed for classification in the taxonomic tree. Illumination and resolution have been optimized and motion artefacts suppressed. The system is evaluated exemplarily on a dataset consisting of 16 insect species of the same as well as different genus, family and order. We demonstrate that standard CNN-architectures like ResNet50 (pretrained on iNaturalist data) or MobileNet perform very well for the prediction task after re-training. Smaller custom-made CNNs also lead to promising results. Download paper here.

This paper presents a multisensor approach that uses AI-based data fusion for insect classification. The system is designed as a low-cost setup and consists of a camera module and an optical wingbeat sensor as well as environmental sensors to measure temperature, irradiance or daytime as prior information. Download paper here.

Classification and XAI Experiments on a dataset of wildbee photos. Visit repository (link to paper, poster and dataset there).

Localizing and classifying soil horizons in multimodal input (visual and tabular). Visit BGR website.

Investigating interpretability in ML neural networks trained to recognize wildbee species on images. Visit KInsecta website.

Reduction of the Impact of untreated Waste Water on the Environment in case of torrential Rain

Visit project website.